Computational Governance Platform

Computational Governance Platform enables a true shift left of data governance within the software and data development processes.

It allows the platform team to create, evolve and enforce computational policies and metrics. This means that data governance is no longer just a set of guidelines: it is enforced automatically through code and cannot be bypassed.

You build it, you govern it!

By leveraging the Computational Governance Platform, Data Engineering teams become accountable for complying with the governance pillars, by means of policy and metric checks.

Context-aware

Governance Entities should be documented, accessible and self-explanatory. A good policy should explain "why" it exists, and what consequences and trade-offs lie behind it. By design, all Metrics and Policies managed by the Computational Governance Platform always describe their purpose and their perimeter of execution.

Governance Entities

Before exploring the functionalities of this powerful platform, it is important to introduce the main concept of the Computational Governance Platform: the Governance Entity.

A Governance Entity is a piece of code that must be executed autonomously and independently of the state of a resource. Therefore, it is essential that it has its own independent execution context.

In general, the characteristics of a computational policy should be the following:

- Transparent and self-describing

- Defined and managed as code

- Executable at any time

- Not bypassable

- Impartial

- Generating standard results

- Having a clear goal and status

The Computational Governance Platform manages two kinds of entities: Policies and Metrics. They differ mainly in the type of result they generate when asked to evaluate a resource.

- Policy: It always responds to evaluation requests with compliant / not compliant. A policy can be expressed as a CUE script or be completely delegated to your custom microservice, which implements the CGP API contract.

- Metric: An evaluation request will compute a numeric measure from an input resource. If any threshold is defined for a metric, the numeric value will be checked against the threshold's range (e.g. between 0 and 5.5).
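The difference between the two result types can be sketched in Python. This is only an illustration, not the platform's implementation: the names `Threshold`, `PolicyResult`, `MetricResult` and `evaluate_metric` are hypothetical, and treating the threshold range as inclusive is an assumption.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Threshold:
    """Hypothetical range a metric value is expected to fall in (assumed inclusive)."""
    lower: float
    upper: float

    def contains(self, value: float) -> bool:
        return self.lower <= value <= self.upper


@dataclass(frozen=True)
class PolicyResult:
    """A policy evaluation has only two outcomes: compliant or not compliant."""
    compliant: bool


@dataclass(frozen=True)
class MetricResult:
    """A metric evaluation yields a number, optionally checked against a threshold."""
    value: float
    within_threshold: Optional[bool]  # None when no threshold is defined


def evaluate_metric(value: float, threshold: Optional[Threshold] = None) -> MetricResult:
    """Wrap a computed measure and check it against the threshold, if any."""
    within = threshold.contains(value) if threshold is not None else None
    return MetricResult(value=value, within_threshold=within)
```

With the range from the example above, `evaluate_metric(3.2, Threshold(0, 5.5))` reports a value inside the threshold, while a metric without a threshold only carries its numeric value.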

Both entities are pieces of code, either embedded in Witboost or hosted in a remote location. Since they are code and thus executable, we can also qualify them as Computational, because they are integrated into the software and data development lifecycle.

The primary function of a Governance Entity is then to evaluate resources. Those resources are supplied by Witboost when an evaluation request is sent to a Metric or a Policy.

The evaluation result is then the result of the execution of a Policy or a Metric against one resource. A resource can be anything that can be described by metadata.

It may sound unusual to read that a metric evaluates a resource, since metrics are often considered just a measure of a dimension. While this is also true for the Computational Governance Platform, keep in mind that a Metric can define a threshold and, when an anomalous measure violates it, the owners of the resource are alerted. This is why, in this context, a metric evaluates a resource.

Throughout these pages you will gain a better understanding of resources, resource types, and how governance entities can evaluate them.

What can I check with a Governance Entity?

The lifecycle of a contract follows two phases:

- Contract: When I am ready to deploy my artifact, the platform through its governance entities requires that a series of capabilities be declared and verifies their completeness, formality, and in some cases also their content. This moment is equivalent to the formal signing of a contract, and if the governance entities are satisfied at this stage, it is equivalent to declaring that the resource will behave exactly as stated.

- Compliance: Once the resource or artifact is running on the platform, it will be verified that what has been declared corresponds to the actual behaviour.

In the signing of the contract, many things can be verified:

- Template Completeness: Is the artifact still compliant in terms of informational structure with the original template?

- Metadata Completeness

  - Data Elements: Are all the fields described properly?

  - Business Elements: Are fields connected with the business dictionary?

  - Service Level Agreement: What is the refresh rate or the maximum timeliness we can expect from this data?

  - Data Delivery Protocol: Is it clear which protocol will be used to expose the data? How can it be accessed?

  - Data Physical Serialization: In which form will data be serialized?

  - and more

- Metadata Correctness: Are the declared metadata relevant to the business glossary or data dictionary?

- Capability Completeness

  - Data Quality: Is Data Quality defined in the contract? Do we have at least x expectations for each data element?

  - PII Discovery: Is the data product declaring PII discovery capabilities or not?

  - Automatic Lineage: Do all the data transformations have embedded lineage configured?

  - Data Masking: Do we have data masking and row filtering policies associated with the data?

  - and more
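As an illustration of a contract-time check, the following Python sketch verifies a small part of metadata completeness. The descriptor layout (a `schema` list whose entries carry `name`, `description` and `businessTerm`, plus a top-level `sla` key) is purely hypothetical and does not reflect the actual Witboost descriptor format.

```python
from typing import Any


def missing_metadata(descriptor: dict[str, Any]) -> list[str]:
    """Return human-readable findings; an empty list means the check passes.

    The keys read here ("schema", "description", "businessTerm", "sla")
    are assumptions made for this example only.
    """
    findings = []
    for field in descriptor.get("schema", []):
        name = field.get("name", "<unnamed>")
        # Data Elements: every field needs a non-blank description.
        if not str(field.get("description", "")).strip():
            findings.append(f"field '{name}' has no description")
        # Business Elements: every field should link to a business term.
        if not field.get("businessTerm"):
            findings.append(f"field '{name}' is not linked to a business term")
    # Service Level Agreement: the declaration must be present.
    if "sla" not in descriptor:
        findings.append("no service level agreement declared")
    return findings
```

Returning a list of findings rather than a single boolean lets the check explain why a resource is not compliant, in line with the self-describing nature of governance entities.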

At deploy time, however, it is not possible to verify whether these declarations actually correspond to what will happen at runtime. Even so, the mere fact that the user has been obliged to make these declarations allows us to set quality standards that must be respected from the start and to which the user formally commits.

At runtime, the governance entity will not have to do anything but compare the actual behaviour to the expected one. The declared metadata and capabilities automatically become a behaviour on which to apply enforcement.

Behaviour correctness:

- Service-Level Agreement: If a worst-case scenario of 24-hour freshness has been declared, it will be verified that the last refresh never exceeds this threshold

- Data Delivery protocol: If it has been declared that the data will be offered with Parquet serialization via the S3 protocol, it will be verified that the data deposited in storage by the data pipelines is actually structured as declared

- Schema: The data pipeline that generates the data must respect this declaration

- Data Quality: Are all the declared KQIs actually calculated?

- and more
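The Service-Level Agreement check above boils down to comparing an observed value with a declared one. A minimal Python sketch follows; the function name `freshness_compliant` and its parameters are hypothetical, and how the last refresh time is obtained from the running resource is left out.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def freshness_compliant(last_refresh: datetime,
                        declared_max_age: timedelta,
                        now: Optional[datetime] = None) -> bool:
    """True when the time since the last refresh does not exceed the declared SLA.

    All datetimes are expected to be timezone-aware; `now` can be injected
    to make the check deterministic in tests.
    """
    now = now or datetime.now(timezone.utc)
    return now - last_refresh <= declared_max_age
```

For a declared worst case of 24 hours, a resource refreshed 23 hours ago is compliant and one refreshed 25 hours ago is not.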

It is of fundamental importance that these governance entities are implemented by the platform team within the platform and integrated with it.

Let's now dive deeper into Governance Entities and see how we can describe:

- Who is involved in the contract?

- Where does the execution of the governance entity happen?

- When should the policy/metric be executed?

- How should the policy/metric evaluate resources?

- Who triggers the execution of a policy/metric?

- Which resources should the policy/metric evaluate?

Interaction Type (Who is involved in the contract?)

Governance Entities are only applicable where a platform exists, because they need to be enforced and verified in a completely independent way. Their execution cannot be handed over to the end user; it must be part of the process. Within a platform there are different forms of rules to be respected: we can refer to them as contracts. As in any ecosystem, we can distinguish two types of "contracts" or Interaction Types:

- User-to-Platform: between the platform and its users. In every "system" there are rules that must be respected just to be part of it; users who do not meet these criteria risk being expelled or having to pay penalties of some sort.

- User-to-User: between users. When a customer-supplier dynamic is established, there are contractual terms that must be respected, and the platform works to ensure that the parties respect them.

Context (Where does the execution of the governance entity happen?)

Governance Entities can be further distinguished by their Context, which can be:

- Global: for governance entities that are run at the platform level for all resources

- Local: for governance entities that are run at resource level, i.e. they are run within the context of the resource itself, thus having the ability to act at a lower and more internal level (e.g. inside the resource's infrastructure)

Timing (When is a governance entity executed?)

Witboost allows a governance entity to be executed at every stage of the lifecycle of a piece of data or an artifact. You decide when by setting the Timing property.

The Timing property of a governance entity can be defined as:

-

Deployment: that as the name implies, will execute a policy or a metric during the deployment phase of a resource, but also in its build phase, to give platform users the possibility to test the compliance of their resources before deployment.

-

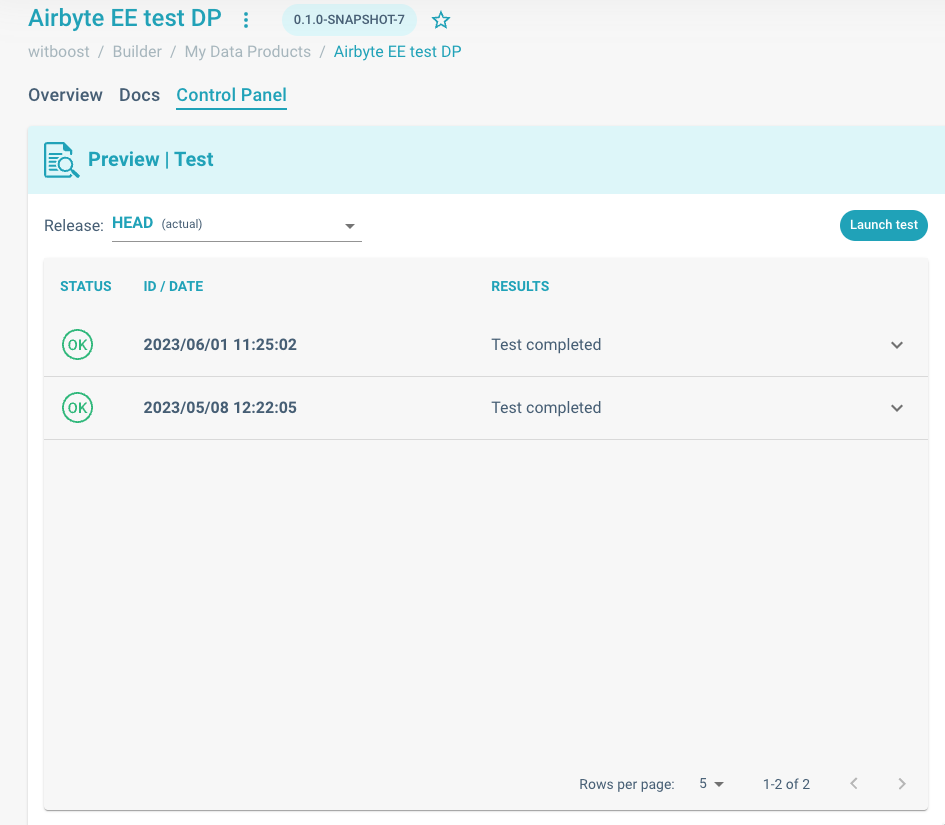

During the build phase: the platform user can test the compliance of their data and artifacts against the policies defined within the platform. This is a self-test done by the user before proceeding to deployment. This test is purely informative for the user.

Builder's control panel. Where you can test a resource against all applicable policies and metrics

-

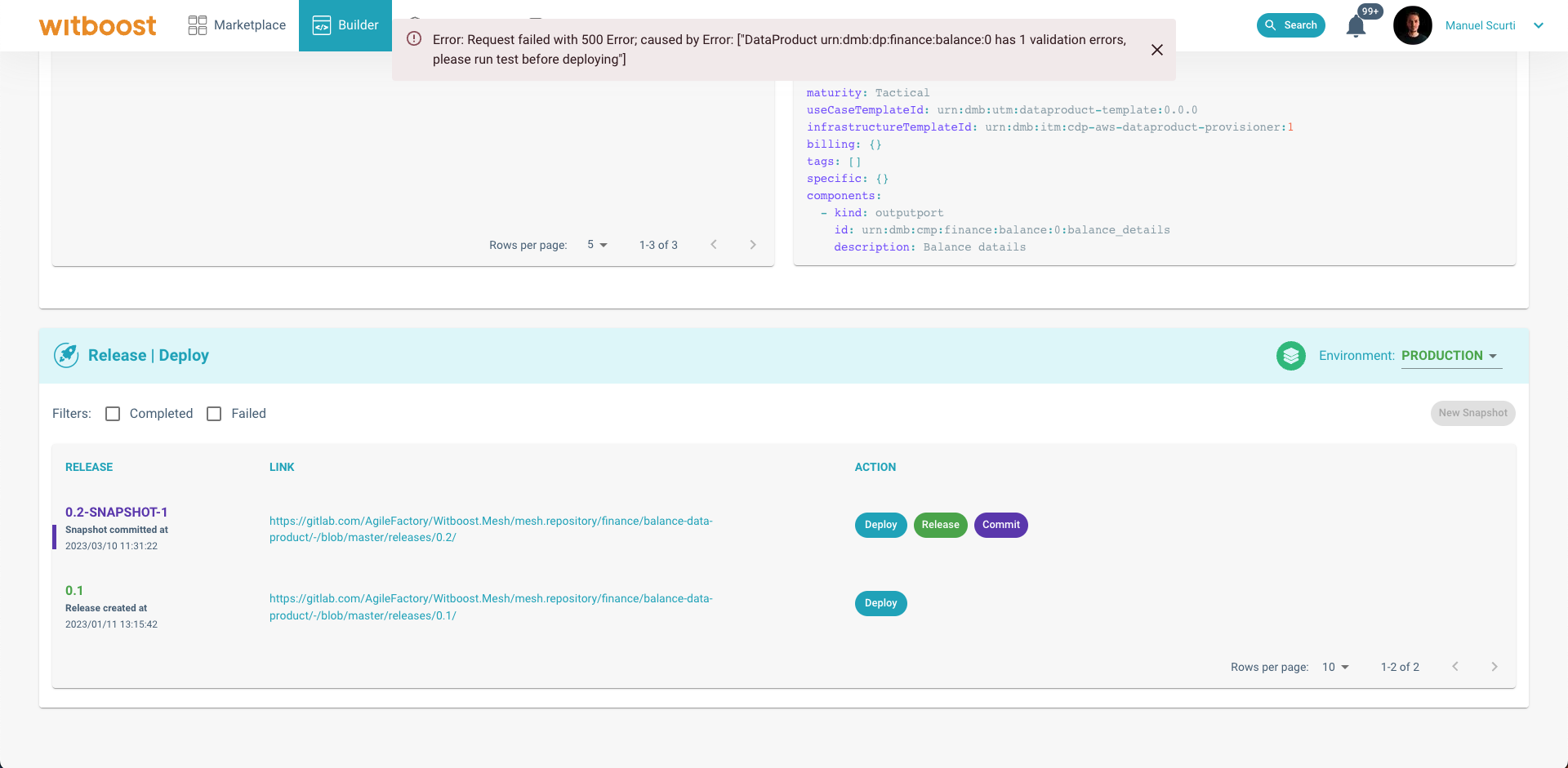

During deployment phase: When the user clicks on deploy, on the other hand, the policy checks become formal and an indispensable precondition to move forward and therefore be part of the platform. In this phase, the platform performs the verification and the associated results are saved, to give everyone assurance that the policies are respected and not bypass-able.

A deployment operation failed due to some policy or metric failing. Testing a resource before deploying helps in troubleshooting validation errors

-

-

Runtime: will execute them during the whole lifecycle of a resource. What does that mean? A policy or metric executed at runtime can follow different strategies:

- Periodic scheduling: for example, every day I check that the data schema is compliant with the one declared in the contract

- Event-based: The policy or metric is executed every time a certain event occurs that could affect compliance with the policy or metric

- Continuous: The policy or metric is executed continuously in the background to check compliance with the contract

Witboost will execute all governance entities that are enabled and applicable to the resource.

The available Timing options depend on the chosen Context:

- When Context is Global, Timing can be either Deployment or Runtime.

- When Context is Local, Timing can only be Runtime, since local governance entities are executed in the context of the resource itself, and thus after the resource has been created.

Furthermore, if Timing is set to Runtime, you can define a scheduled execution through a cron expression, but only when the platform itself triggers the execution of the Governance Entity, that is, when Trigger is set to Active.
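The constraints between Context, Timing, Trigger and scheduling can be summarized as a small validation routine. The Python sketch below only encodes the rules stated in this section; the enum and function names are hypothetical and are not part of any Witboost API.

```python
from enum import Enum
from typing import Optional


class Context(Enum):
    GLOBAL = "Global"
    LOCAL = "Local"


class Timing(Enum):
    DEPLOYMENT = "Deployment"
    RUNTIME = "Runtime"


class Trigger(Enum):
    ACTIVE = "Active"
    PASSIVE = "Passive"


def validate_settings(context: Context, timing: Timing, trigger: Trigger,
                      cron: Optional[str] = None) -> list[str]:
    """Return the violated constraints; an empty list means the combination is allowed."""
    errors = []
    # Local entities run inside the resource, so the resource must already exist.
    if context is Context.LOCAL and timing is not Timing.RUNTIME:
        errors.append("Local context only supports Runtime timing")
    # Scheduling is only meaningful when the platform itself starts the execution.
    if cron is not None and not (timing is Timing.RUNTIME and trigger is Trigger.ACTIVE):
        errors.append("a cron schedule requires Runtime timing and an Active trigger")
    return errors
```

For example, `validate_settings(Context.GLOBAL, Timing.RUNTIME, Trigger.ACTIVE, cron="0 6 * * *")` returns no errors, while the same schedule with a Passive trigger is rejected. The cron string itself is not parsed in this sketch.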

Engine and Preprocessing (How should the governance entity evaluate resources?)

Witboost allows you to plug in any implementation of a governance entity, standardizing the concept and behaviour with respect to the platform. There are no technological limitations or imposed requirements in this regard, in compliance with our principles of technology agnosticism. We offer different patterns to evaluate a resource's descriptor. These patterns can be described by the Engine and Resource Preprocessing. The available options for both properties can differ according to type of Governance Entity and the chosen Context.

For both policies and metrics

- Evaluate API: You can implement your own business logic for evaluating a resource's descriptor by implementing the "/evaluate" REST API and registering it within the platform. These services can be implemented using your preferred language and patterns (Python, Open Policy Agent, etc.).

Available just for policies

- CUELang: You can define a Policy by leveraging CUELang, which is able to define, generate, and validate any type of data: configurations, database connections, code, etc.

Witboost offers an integrated service where you can create and modify policies in this language directly in the platform, and then execute them during deployment. All in a highly integrated and fully manageable way from the Computational Governance Platform.

This is summarized by the Engine property that can assume all these values or a subset of them:

- CUE: A Policy with Engine CUE requires a CUE script to be created and managed. This kind of Engine is not available to Metrics.

- Remote: A Governance Entity with Engine Remote requires a URL to be filled in the wizard: the base URL of the service that implements the Evaluate API.
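To give an idea of what a Remote engine might look like, here is a minimal Python sketch of a service exposing a POST "/evaluate" endpoint, using only the standard library. The request and response shapes are assumptions made for illustration: the real payloads are defined by the CGP API contract, which should be consulted before implementing a service. The rule itself (a descriptor must declare an `owner`) and the response keys `satisfiesPolicy` and `errors` are hypothetical.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Any


def evaluate_descriptor(descriptor: dict[str, Any]) -> dict[str, Any]:
    """Example business rule: a resource must declare an owner.

    The response keys ("satisfiesPolicy", "errors") are hypothetical.
    """
    errors = [] if descriptor.get("owner") else ["descriptor does not declare an owner"]
    return {"satisfiesPolicy": not errors, "errors": errors}


class EvaluateHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        if self.path != "/evaluate":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        try:
            descriptor = json.loads(self.rfile.read(length) or b"{}")
        except json.JSONDecodeError:
            self.send_error(400, "body is not valid JSON")
            return
        if not isinstance(descriptor, dict):
            self.send_error(400, "descriptor must be a JSON object")
            return
        body = json.dumps(evaluate_descriptor(descriptor)).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port: int = 8080) -> None:
    """Block and serve evaluation requests until interrupted."""
    HTTPServer(("", port), EvaluateHandler).serve_forever()
```

Calling `serve()` starts the service locally. Keeping the rule in a plain function such as `evaluate_descriptor` makes it testable without starting an HTTP server.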

We can then further describe how you want to transform the input resource before giving it as an input to the engine by specifying a Resource Preprocessing method:

- Default: The engine (e.g. the Evaluate API endpoint) will receive the resource's descriptor as is.

- Previous vs Current: The engine will receive both the current version of the resource's descriptor available within your infrastructure and the version of the descriptor that contains the newest changes you are working on and would like to introduce.

  This is useful, for instance, to prevent unwanted breaking changes in data contracts and to make the resource evolve in a controlled manner. (See Breaking Change Governance Entities)
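A check built on the Previous vs Current preprocessing could compare the two descriptors and report incompatible changes. The Python sketch below assumes a hypothetical descriptor layout in which `schema` is a list of fields with `name` and `type`; it treats removed fields and changed types as breaking, and newly added fields as non-breaking, which is a design choice of this example rather than a platform rule.

```python
from typing import Any


def breaking_changes(previous: dict[str, Any], current: dict[str, Any]) -> list[str]:
    """List the changes in `current` that would break consumers of `previous`.

    The descriptor layout ("schema" entries with "name" and "type") is an
    assumption made for this example.
    """
    prev_fields = {f["name"]: f["type"] for f in previous.get("schema", [])}
    curr_fields = {f["name"]: f["type"] for f in current.get("schema", [])}
    findings = []
    for name, old_type in prev_fields.items():
        if name not in curr_fields:
            findings.append(f"field '{name}' was removed")
        elif curr_fields[name] != old_type:
            findings.append(
                f"field '{name}' changed type from {old_type} to {curr_fields[name]}"
            )
    return findings
```

An empty result means the new descriptor can replace the deployed one without breaking the declared schema.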

When the Trigger is set to Passive, Engine and Resource Preprocessing will default to None, meaning that no Engine, and thus no Resource Preprocessing, will be invoked by the Computational Governance Platform.

All evaluations generated by a Passive Governance Entity are sent directly to the platform at any time. The Computational Governance Platform will act just as an inbox for these results and produce reports and flags accordingly.

Trigger (Who triggers the execution of this governance entity?)

The Trigger property defines who initiates the execution of a policy/metric. This could be an automated system process, a specific event, or a manual trigger from a user. The Trigger setting can significantly affect the quality of resources within Witboost, since it determines the frequency of execution and hence the system's responsiveness to changes.

Trigger can be set to:

- Active: In this case, the Computational Governance Platform itself initiates the execution of the governance entity. This could be based on certain event triggers or a pre-set schedule, such as daily or after a specific event in a resource's lifecycle. Active triggers are beneficial for enforcing policies and metrics both at run time and deploy time and ensuring resources are consistently in compliance with governance rules.

- Passive: Execution of the governance entity is triggered externally, and the results are then sent to the platform. This is useful for situations where evaluations need to be performed under specific conditions or based on custom logic not directly integrated into the platform. However, this requires an external system or user to manually submit the evaluation result.

Resource Type (Which resources should the governance entity evaluate?)

We have seen how a Governance Entity can be defined and implemented. Last but not least, we can define which kind of resources the governance entity will evaluate, and this is defined by the Resource Type property.

The Resource Types compatible with the Computational Governance Platform depend on the Perimeter Resolvers defined in the configuration.

As an example, if a Governance Entity's Resource Type is set to Data Product, it will receive only Data Product descriptors resolved by the Perimeter Resolver. In this way, the engine of the Governance Entity always receives the descriptors that it expects and that it was designed to process.

See Resources for more details.